Application Sandboxing, Signing, and Permissions

Mobile devices have become similar to PCs, where it is less about the underlying operating system and more about the applications running on it. For example, the iPhone is a great product, but the applications that run on top of the iPhone OS bring it a significant amount of appeal as well. As in the desktop world, applications that are not under tight security controls could do more harm than good. Furthermore, as security controls on operating systems get tighter, attackers are more likely to develop hostile applications (also known as malware) that entice users to download and install them than to try to find a vulnerability in the operating system itself. Application sandboxing and signing are therefore two important items for mobile operating systems: sandboxing ensures applications are only allowed access to the parts of the core OS they need, and signing ensures they are vetted before being presented to the mobile user for download. This section covers some of the security features available on mobile operating systems to protect applications from each other as well as the underlying OS, including application sandboxes, application signing, and application permissions.

Application Sandboxing

Isolating mobile applications into a sandbox provides many benefits, not only for security but also for stability. Mobile applications might be written by a large organization with a proper security SDL (software development life cycle), or they might be written by a few people in their spare time. It is impossible to vet every application before it lands on your mobile phone, so to keep the OS clean and safe, it is better to isolate the applications from each other than to assume they will play nice. In addition to isolation, limiting the application’s calls into the core OS is also important. In general, the application should only have access to the core OS in controlled and required areas, not the entire OS by default. For example, in Windows Vista, Internet Explorer (IE) calls to the operating system are very limited, unlike previous versions of IE and Windows XP. In the old world, web applications could break out of IE and access the operating system for whatever purpose, which became a key attack vector for malware. Under Vista and IE7 Protected Mode, access to the core operating system is very limited, with access only to certain directories deemed “untrusted” by the rest of the OS. Overall, the primary goals of application sandboxing are to ensure one application is protected from another (for example, your PayPal application from the malware you just downloaded), to protect the underlying OS from the application (both for security and stability reasons), and to ensure one bad application is isolated from the good ones.

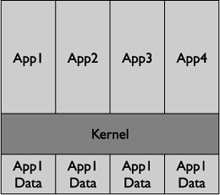

All mobile operating systems have implemented some form of application isolation, but in different forms. The newer model of application sandboxing gives each application its own unique identity. Any data, process, or permission associated with the application remains glued to the identity, reducing the amount of sharing across the core OS. For example, an application running under one identity would not have access to any data, files, or folders assigned to another application’s identity (see Figure 1).

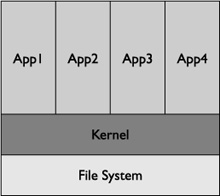

The traditional model uses Normal and Privileged assignments, where Privileged applications have access to everything on the device, and all Normal applications have access to the same limited set of entities on the device. For example, this model would prevent Normal applications from accessing parts of the file system that are set aside for Privileged applications; however, all Normal applications would have access to the same set of files/folders on the device (see Figure 2).
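The difference between the two models can be sketched in a few lines of Python. This is only an illustrative model, not how any of the platforms implement their checks; the `Resource` type, the function names, and the example paths are all made up for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    path: str
    owner: str        # identity model: the application identity the resource is bound to
    restricted: bool  # traditional model: True if set aside for Privileged applications


def identity_model_allows(app_id: str, resource: Resource) -> bool:
    """Newer model: an application may touch only resources bound to its own identity."""
    return resource.owner == app_id


def traditional_model_allows(tier: str, resource: Resource) -> bool:
    """Traditional model: Privileged sees everything, Normal apps share one common subset."""
    if tier == "Privileged":
        return True
    if tier == "Normal":
        return not resource.restricted
    return False


# Hypothetical resources used only for illustration.
bank_db = Resource("/data/bank/accounts.db", owner="bank_app", restricted=False)
system_cfg = Resource("/system/config", owner="os", restricted=True)

print(identity_model_allows("game_app", bank_db))     # another identity's data is off limits
print(traditional_model_allows("Normal", bank_db))    # every Normal app shares this file
print(traditional_model_allows("Normal", system_cfg))
```

The point of the comparison is the second call: under the traditional model, any Normal application can reach the banking data, whereas the identity model refuses every application except the owner.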

The next two subsections provide a short summary of how the different mobile operating systems measure up in terms of application sandboxing. Much of this information comes from Chris Clark’s research on mobile application security, presented at the RSA Conference

(https://365.rsaconference.com/blogs/podcast_series_rsa_conference_2009/2009/03/31/christopher-clark-and-301-mobile-application-security—why-is-it-so-hard).

Windows Mobile/BlackBerry OS

BlackBerry devices and Windows Mobile both use the traditional model for application sandboxing. For example, BlackBerry uses Normal and Untrusted roles, whereas Windows Mobile uses Normal, Privileged, and Blocked. On Windows Mobile, Privileged applications have full access to the entire device and its data, processes, APIs, and files/folders, as well as write access to the entire registry. Normal applications have access to only parts of the file system, but all the Normal applications have access to the same subset of the operating system. It should be noted that although one Normal application can access the same part of the file system as another Normal application, it cannot directly read or write to the other application’s process memory. Blocked applications are basically null: they are not allowed to run at all.

So how does an application become a Privileged application? Through application signing, which is discussed in the “Application Signing” section. On Windows Mobile, the certificate used to sign the application determines whether the application runs in Normal mode or Privileged mode. If you want your application to run as Privileged instead of Normal, you have to go through a more detailed process with the service provider that signs your applications.
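As a rough sketch of how a signing certificate could map to a trust tier, consider the following Python model. The certificate identifiers, the `tier_for` function, and the `allow_unsigned` policy flag are assumptions made for illustration; they are not Windows Mobile APIs or real certificate names.

```python
from enum import Enum
from typing import Optional


class Tier(Enum):
    BLOCKED = "Blocked"
    NORMAL = "Normal"
    PRIVILEGED = "Privileged"


# Hypothetical certificate identifiers; a real device keeps certificates
# in protected stores rather than in plain sets of strings.
PRIVILEGED_CERTS = {"example-privileged-cert"}
NORMAL_CERTS = {"example-normal-cert"}


def tier_for(signing_cert: Optional[str], allow_unsigned: bool = True) -> Tier:
    """Pick the tier an application runs in, based on the certificate that signed it."""
    if signing_cert in PRIVILEGED_CERTS:
        return Tier.PRIVILEGED
    if signing_cert in NORMAL_CERTS:
        return Tier.NORMAL
    # Unsigned or unrecognized signer: run with low privileges only if the
    # (assumed) device policy permits it, otherwise do not run at all.
    return Tier.NORMAL if allow_unsigned else Tier.BLOCKED


print(tier_for("example-privileged-cert"))
print(tier_for(None))
print(tier_for(None, allow_unsigned=False))
```

The application itself has no say in the outcome here; the tier follows entirely from the signer and the device policy.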

iPhone/Android

Both the iPhone and Android

use a newer sandboxing model where application roles are attached to

file permissions, data, and processes. For example, Android assigns each

application a unique ID, which is isolated from other applications by

default. The isolation keeps the application’s data and processes away

from another application’s data and processes.

Application Signing

Application signing is simply a vetting process that provides users some level of assurance concerning the application. It serves to associate authorship and privileges with an application, but should not be thought of as a measure of the security of the application or its code. For example, for an application to have full access to a device, it would need the appropriate signature. Also, if an application is not signed, it would have far fewer privileges and couldn’t be widely distributed through the various application stores of the mobile devices; in some mobile operating systems, it would have no privileges or distribution at all. Basically, depending on whether or not the application is signed, and what type of certificate is used, different privileges are granted on the OS. It should be noted that receiving a “privileged” certificate versus a “normal” certificate has little to do with technical items, but rather legal items. In terms of getting a signed certificate, you have a few choices, including Mobile2Market, Symbian Signed, VeriSign, GeoTrust, and Thawte. The process of getting a certificate from each of the providers is a bit different, but they all follow these general guidelines:

1. Purchase a certificate from a Certificate Authority (CA), and identify your organization to the CA.
2. Sign your application using the certificate purchased in step 1.
3. Send the signed application to the CA, which then verifies the organization signature on your application.
4. The CA then replaces your user-signed certificate from step 1 with its CA-signed certificate.
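The sign, verify, and countersign flow in the steps above can be sketched with a toy signature scheme. The Python below uses textbook RSA with small, well-known Mersenne primes purely so the example is self-contained; it is not secure, it is not how any real CA or signing tool works, and every name in it (`make_key`, `ca_countersign`, and so on) is hypothetical.

```python
import hashlib

E = 65537  # public exponent shared by both toy key pairs


def make_key(p: int, q: int):
    """Return (modulus, private exponent) for a textbook RSA key built from primes p and q."""
    n = p * q
    d = pow(E, -1, (p - 1) * (q - 1))  # modular inverse, Python 3.8+
    return n, d


def digest(data: bytes, n: int) -> int:
    """Hash the data and reduce it so it fits under the modulus."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % n


def sign(data: bytes, n: int, d: int) -> int:
    return pow(digest(data, n), d, n)


def verify(data: bytes, signature: int, n: int) -> bool:
    return pow(signature, E, n) == digest(data, n)


# Toy keys built from Mersenne primes; far too small for real use.
DEV_N, DEV_D = make_key(2**31 - 1, 2**61 - 1)   # developer key (steps 1 and 2)
CA_N, CA_D = make_key(2**89 - 1, 2**107 - 1)    # certificate authority key


def ca_countersign(app: bytes, dev_signature: int):
    """Steps 3 and 4: the CA checks the developer signature, then applies its own."""
    if not verify(app, dev_signature, DEV_N):
        return None  # refuse to vouch for an application it cannot tie to the developer
    return sign(app, CA_N, CA_D)


app = b"example application bytes"
dev_sig = sign(app, DEV_N, DEV_D)
ca_sig = ca_countersign(app, dev_sig)
print(verify(app, ca_sig, CA_N))
```

A device that trusts the CA only needs the CA's public modulus to check the final signature, which is why a modified application fails verification even though the original passed.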

If you wish your mobile application to run with Privileged access on the Windows Mobile OS, your organization will still have to conform to the technical requirements listed at http://blogs.msdn.com/windowsmobile/articles/248967.aspx, which include certain do’s and don’ts for the registry and APIs. The sticking point is actually agreeing to be legally liable if you break the technical agreements (and being willing to soak up the financial consequences).

The impact to the security

world is pretty straightforward, so as to separate the malware

applications from legitimate ones. The assumption is that a malware

author would not be able to bypass the appropriate levels of controls by

a signing authority to get privileged level access or distribution

level access to the OS, or even basic level access in some devices.

Furthermore, if that were to happen, the application sandbox controls,

described previously, would further block the application. A good

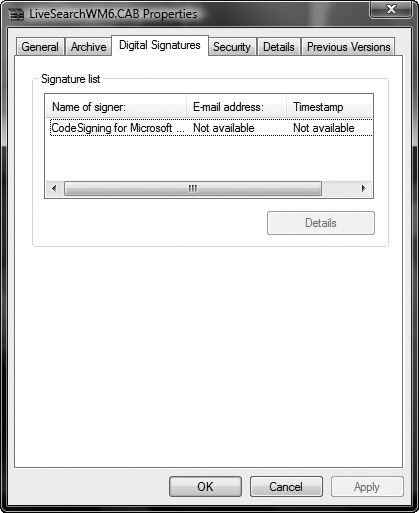

example of the visual distinction of applications that are signed from

applications that are not signed is shown in Figure 3, which shows a signed CAB file on Windows Vista (right-click the CAB file and select Properties).

In terms of the major mobile operating systems, most, if not all, require some sort of signing. For example, both BlackBerry and Windows Mobile require signing via CAs, although both allow unsigned code to run on the device (but with low privileges). Furthermore, the iPhone and Android require application signing as well, and in both cases signing is tied to the respective application store. Specifically, any application distributed via the application store would have to be signed first; however, Android allows self-signed certificates whereas the iPhone does not.

Permissions

File permissions on mobile devices have a different meaning than in regular operating systems, because there’s really no notion of multiple user roles on a mobile operating system. On a mobile operating system, file permissions are more for applications, ensuring they only have access to their own files/folders and no or limited access to another application’s data. On most mobile operating systems, including Windows Mobile and the Apple iPhone, the permission model closely follows the application sandboxing architecture. For example, on the iPhone, each application has access to only its own files and services, thus preventing it from accessing another application’s files and services. On the other hand, Windows Mobile uses the Privileged, Normal, and Blocked categories, where Privileged applications can access any file or any part of the file system. Applications in Normal mode cannot access restricted parts of the file system, but they all can access the nonrestricted parts collectively. Similar to the iPhone, Android has a very fine-grained permission model. Each application is assigned a UID, similar to the UID in the Unix world, and that UID can only access files and folders that belong to it, nothing else (by default). Applications installed on Android will always run as their given UID on a particular device, and the UID of an application will be used to prevent its data from being shared with other applications.
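The UID-based isolation described for Android follows the ordinary Unix owner/other permission check, which can be modeled as below. This is a simplified sketch that ignores groups and is not Android source code; the `FileEntry` type, the `can_read` function, and the UID values are illustrative assumptions.

```python
import stat
from dataclasses import dataclass


@dataclass
class FileEntry:
    owner_uid: int  # UID of the application that created the file
    mode: int       # Unix permission bits, for example 0o600


def can_read(uid: int, entry: FileEntry) -> bool:
    """Simplified Unix read check: owner bits for the owner, 'other' bits for everyone else."""
    if uid == entry.owner_uid:
        return bool(entry.mode & stat.S_IRUSR)
    return bool(entry.mode & stat.S_IROTH)


# Illustrative UIDs for two installed applications.
MAIL_APP_UID = 10001
GAME_APP_UID = 10002

private_db = FileEntry(owner_uid=MAIL_APP_UID, mode=0o600)   # default: owner only
shared_file = FileEntry(owner_uid=MAIL_APP_UID, mode=0o644)  # owner opted in to sharing

print(can_read(MAIL_APP_UID, private_db))
print(can_read(GAME_APP_UID, private_db))
print(can_read(GAME_APP_UID, shared_file))
```

The last case shows why the text says "by default": isolation holds unless the owning application deliberately loosens the permissions on its own files.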

Table 1,

created by Alex Stamos and Chris Clark of iSEC Partners, shows the

high-level permission model for applications installed on the major

platforms for critical parts of the mobile device.

Table 1. Security Permissions Summary

| Data Type | BlackBerry | Windows Mobile 6 | Apple iPhone 2.2.1 | Google Android |
|---|---|---|---|---|
| E-mail | Privileged | Normal | None | Permission |
| SMS | Privileged | Normal | None | Permission |
| Photos | Privileged | Normal | UIImagePickerController | Permission |
| Location | Privileged | Normal | First Use, Prompts User | Permission |
| Call history | Privileged | Normal | None | Permission |
| Secure Digital (SD) cards | Privileged | Normal | N/A | Permission |
| Access network | Privileged | Normal | Normal | Permission |

Buffer Overflow Protection

The final category we’ll discuss is protection against buffer overflows. Before cross-site scripting dominated the security conversation, buffer overflows were the main attack class every security person worried about. A tremendous number of good resources for learning about buffer overflows exist. Refer to the following link to get started: http://en.wikipedia.org/wiki/Buffer_overflow.

If an operating system is

written in C, Objective-C, or C++, buffer overflows are a major attack

class that needs to be addressed. In the case of major mobile operating

systems, both Windows Mobile (C, C++, or .NET) and the iPhone

(Objective-C) utilize these languages.

The result of exploiting a buffer overflow vulnerability is usually remote root access to the system or a process crash, either of which is bad for mobile operating environments. Furthermore, buffer overflows have created serious havoc on commercial-grade operating systems such as Windows 2000/NT/XP; therefore, it is imperative to avoid any similar experiences on newly created mobile operating systems (where most are based on existing operating systems). The main focus of this section is to describe which mobile operating systems have inherited protection from buffer overflows. Because buffer overflows are not a new attack class, but rather a dated one that affects systems written in C or C++, several years have been devoted to creating mitigations to help protect programs and operating systems. The following subsections describe how each major platform mitigates buffer overflows.

Windows Mobile

Windows Mobile uses the /GS flag to mitigate buffer overflows. The /GS flag is the buffer security check option in the Visual Studio compiler. It should not be used as a complete foolproof solution to find all buffer overflows in code, nor should anything be used in that fashion. Rather, it’s an easy tool for developers to use while they are compiling their code. Note that code containing a buffer overflow still compiles when the /GS flag is enabled; the flag adds runtime checks, so an overrun is detected, and the program terminated, when the affected function returns. The following description of the /GS flag comes from the MSDN site:

“[It] detects some buffer overruns that overwrite the return address, a common technique for exploiting code that does not enforce buffer size restrictions. This is achieved by injecting security checks into the compiled code.”

So what does the /GS flag actually do? It focuses on stack-based buffer overflows (not the heap) using the following guidelines:

- Detect buffer overruns on the return address.
- Protect against known vulnerable C and C++ code used in a function.
- Require the initialization of the security cookie. The security cookie is put on the stack when the function is entered and checked again when the function exits. If any difference between the security cookie and what is on the stack is detected, the program is terminated immediately.
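The security cookie check can be illustrated with a small simulation. The Python below models a stack frame as a byte array and is only a conceptual sketch; real /GS checks are emitted by the compiler into native code, and the frame layout, sizes, and names used here are assumptions chosen for clarity.

```python
import secrets

BUF_SIZE = 8      # size of the local buffer in the simulated frame
COOKIE_SIZE = 4   # size of the simulated security cookie


class StackSmashDetected(Exception):
    """Raised when the cookie no longer matches at function exit."""


def call_with_cookie(user_input: bytes, return_address: bytes = b"\x00\x40\x10\x00") -> bytes:
    # Function entry: place a random cookie between the buffer and the return address.
    cookie = secrets.token_bytes(COOKIE_SIZE)
    # Simulated frame layout: [ buffer | cookie | return address ]
    frame = bytearray(BUF_SIZE) + bytearray(cookie) + bytearray(return_address)

    # Unchecked copy, in the spirit of strcpy: long input runs past the buffer.
    n = min(len(user_input), len(frame))
    frame[:n] = user_input[:n]

    # Function exit: compare the cookie on the stack with the saved value.
    if bytes(frame[BUF_SIZE:BUF_SIZE + COOKIE_SIZE]) != cookie:
        raise StackSmashDetected("security cookie was overwritten")
    return bytes(frame[BUF_SIZE + COOKIE_SIZE:])  # the return address that would be used


print(call_with_cookie(b"hi"))
try:
    call_with_cookie(b"A" * 16)
except StackSmashDetected as err:
    print("terminated:", err)
```

Because the cookie sits between the buffer and the return address, a linear overrun has to clobber the cookie before it can reach the return address, which is what makes the exit check effective against this particular pattern.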

iPhone

The iPhone OS mitigates buffer overflows by making the stack and heap on the OS nonexecutable. This means that any attempt to execute code on the stack or heap will not be successful, but rather cause an exception in the program itself. Because most malicious attacks rely on executing code in memory, traditional attacks using buffer overflows usually fail.

The implementation of this protection on the iPhone OS is performed using the NX bit (also known as the No eXecute bit). The NX bit simply marks certain areas of memory as nonexecutable, preventing the process from executing any code in those marked areas. Similar to the /GS flag on Windows Mobile, the NX bit should not be seen as a replacement for writing secure code, but rather as a mitigation step to help prevent buffer overflow attacks on the iPhone OS.
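Conceptually, the NX bit is a per-region flag that the processor consults before running code from that region. The following Python model is a deliberately simplified illustration of that idea, not a description of the iPhone OS memory manager; the `Region` type and `execute` function are hypothetical.

```python
from dataclasses import dataclass


class ExecutionFault(Exception):
    """Raised when code is fetched from a region marked nonexecutable."""


@dataclass
class Region:
    name: str
    data: bytes
    executable: bool  # False models a region with the NX bit set


def execute(region: Region) -> str:
    """Refuse to run bytes from any region that is marked nonexecutable."""
    if not region.executable:
        raise ExecutionFault(f"attempt to execute from nonexecutable region: {region.name}")
    return f"running {len(region.data)} bytes from {region.name}"


text = Region("text", b"\x90" * 16, executable=True)
stack = Region("stack", b"\xcc" * 16, executable=False)  # attacker-supplied bytes land here

print(execute(text))
try:
    execute(stack)
except ExecutionFault as err:
    print("fault:", err)
```

An overflow can still place attacker-controlled bytes on the stack in this model; the flag only prevents those bytes from being run as code, which is why it is a mitigation rather than a fix.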

Android

Google’s Android OS mitigates buffer overflow attacks by leveraging the use of ProPolice, OpenBSD malloc/calloc, and the safe-iop library. ProPolice is a stack-smashing protector for C and C++, implemented as an extension to gcc. The idea behind ProPolice is to protect applications by preventing the ability to manipulate the stack. Also, because protecting against heap-based buffer overflows is difficult with ProPolice, the use of OpenBSD’s malloc and calloc functions provides additional protection. For example, OpenBSD’s malloc makes performing heap overflows more difficult.

In addition to ProPolice and the use of OpenBSD’s malloc/calloc, Android uses the safe-iop library, written by Will Drewry. More information can be found at http://code.google.com/p/safe-iop/. Basically, safe-iop provides functions to perform safe integer operations on the Android platform.
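To show what a safe integer operation means in practice, here is a minimal Python sketch of overflow-checked arithmetic. The function names are hypothetical and do not reflect the safe-iop API; since Python integers never overflow, the signed 32-bit range is modeled explicitly.

```python
from typing import Optional

INT32_MIN = -2**31
INT32_MAX = 2**31 - 1


def checked_add(a: int, b: int) -> Optional[int]:
    """Return a + b, or None if the result does not fit in a signed 32-bit integer."""
    result = a + b
    return result if INT32_MIN <= result <= INT32_MAX else None


def checked_mul(a: int, b: int) -> Optional[int]:
    """Return a * b, or None if the result does not fit in a signed 32-bit integer."""
    result = a * b
    return result if INT32_MIN <= result <= INT32_MAX else None


def safe_alloc_size(count: int, item_size: int) -> Optional[int]:
    """Compute a buffer size for `count` items, refusing negative or overflowing requests."""
    if count < 0 or item_size < 0:
        return None
    return checked_mul(count, item_size)


print(safe_alloc_size(10, 4))
print(safe_alloc_size(65536, 65536))  # would wrap around in unchecked 32-bit arithmetic
```

The connection to buffer overflows is the allocation case: if a size calculation silently wraps, the program allocates a buffer that is too small and later writes past its end, so rejecting the overflowing calculation removes the precondition for the overrun.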

Overall, Android uses a few

items to help protect from buffer overflows. As always, none of the

solutions is foolproof or perfect, but each offers some sort of

protection from both stack-based and heap-based buffer overflow attacks.

BlackBerry

Buffer overflow

protection on the BlackBerry OS isn’t relevant because the OS is built

heavily on Java (J2ME+), where the buffer overflow attack class does not

apply. As noted earlier, buffer overflows are an attack class that

targets C, Objective-C, or C++. The BlackBerry OS, however, is mainly

written in Java. (It should be noted that parts of the BlackBerry OS are

not written in Java.) More information about BlackBerry’s use of Java can be found at http://developers.sun.com/mobility/midp/articles/blackberrydev/.