In the world of expressions, layer space transforms

are indispensable, but they present some of the most difficult concepts

to grasp. There are three coordinate systems in After Effects, and layer

space transforms provide you with the tools you need to translate

locations from one coordinate system to another.

One coordinate system represents a layer’s own space. This is the coordinate system relative (usually) to the layer’s upper-left corner. In this coordinate system, [0, 0] represents a layer’s upper-left corner, [width, height] represents the lower-right corner, and [width, height]/2

represents the center of the layer. Note that unless you move a layer’s

anchor point, it, too, will usually represent the center of the layer

in the layer’s coordinate system.

The second coordinate system represents world space. World coordinates are relative to [0, 0, 0]

of the composition. This starts out at the upper-left corner of a newly

created composition, but it can end up anywhere relative to the comp

view if the comp has a camera and the camera has been moved, rotated, or

zoomed.

The last coordinate system represents comp space. In this coordinate system, [0, 0]

represents the upper-left corner of the camera view (or the default

comp view if there is no camera), no matter where the camera is located

or how it is oriented. In this coordinate system, the lower-right corner

of the camera view is given by [thisComp.width, thisComp.height].

In comp space, the Z coordinate really doesn’t have much meaning

because you’re only concerned with the flat representation of the camera

view (Figure 1).

So when would you use layer space transforms? One of the most common uses

is probably to provide the world coordinates of a layer that is the

child of another layer. When you make a layer the child of another

layer, the child layer’s Position value changes from the world space

coordinate system to layer space of the parent layer. That is, the child

layer’s Position becomes the distance of its anchor point from the

parent layer’s upper-left corner. So a child layer’s Position is no

longer a reliable indicator of where the layer is in world space. For

example, if you want another layer to track a layer that happens to be a

child, you need to translate the child layer’s position to world

coordinates. Another common application of layer space transforms allows

you to apply an effect to a 2D layer at a point that corresponds to

where a 3D layer appears in the comp view. Both of these applications

will be demonstrated in the following examples.
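To make the parent-to-world conversion concrete, here is a minimal sketch in plain JavaScript rather than an After Effects expression. It assumes a 2D parent with uniform scale and no camera, and the names layerToOuter, parent, and childPosition are hypothetical stand-ins; inside After Effects, toWorld() or toComp() does this work for you.

```javascript
// Hypothetical helper: map a point from a layer's own space into the space
// that contains the layer (world space for an unparented 2D layer).
// layer: { position: [x, y], anchorPoint: [x, y], scale: percent, rotation: degrees }
function layerToOuter(layer, point) {
  const s = layer.scale / 100;
  const a = layer.rotation * Math.PI / 180;
  // Offset of the point from the layer's anchor point, scaled.
  const dx = (point[0] - layer.anchorPoint[0]) * s;
  const dy = (point[1] - layer.anchorPoint[1]) * s;
  // Rotate the offset, then place it at the layer's Position.
  // Y points down, so positive angles turn clockwise on screen.
  return [
    layer.position[0] + dx * Math.cos(a) - dy * Math.sin(a),
    layer.position[1] + dx * Math.sin(a) + dy * Math.cos(a),
  ];
}

// Assumed sample values, not taken from any real project.
const parent = { position: [400, 300], anchorPoint: [50, 50], scale: 100, rotation: 90 };

// Once parented, the child's Position is stored in the parent's layer space.
const childPosition = [150, 50];

// Where the child's anchor point actually sits in world space.
const childWorld = layerToOuter(parent, childPosition);
console.log(childWorld);
```

With the parent rotated 90 degrees, the child's stored Position of [150, 50] lands at roughly [400, 400] in world space, which is why the raw Position value is not a reliable target for tracking.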

Effect Tracks Parented Layer

To start, consider a relatively simple example: You

have a layer named “star” that’s the child of another layer, and you

want to rotate the parent, causing the child to orbit the parent. You

have applied CC Particle Systems II to a comp-sized layer and you want

the Producer Position of the particle system to track the comp position

of the child layer. The expression you need to do all this is

L = thisComp.layer("star");

L.toComp(L.transform.anchorPoint)

The first line is a little trick I like to use to make the following lines shorter and easier to manage. It creates a variable L

and sets it equal to the layer whose position needs to be translated.

It’s important to note that you can use variables to represent more than

just numbers. In this case the variable is representing a layer object.

So now, when you want to reference a property or attribute of the

target layer, instead of having to prefix it with thisComp.layer("star"), you can just use L.

In the second line the toComp() layer space transform translates the target layer’s anchor point from the layer’s own

space to comp space. The transform uses the anchor point because it

represents the layer’s position in its own layer space. Another way to

think of this second line is “From the target layer’s own layer space,

convert the target layer’s anchor point into comp space coordinates.”

This simple expression can be used in many ways. For

example, if you want to simulate the look of 3D rays emanating from a 3D

shape layer, you can create a 3D null and make it the child of the

shape layer. You then position the null some distance behind the shape

layer. Then apply the CC Light Burst 2.5 effect to a comp-sized 2D layer

and apply this expression to the effect’s Center parameter:

L = thisComp.layer("source point");

L.toComp(L.anchorPoint)

(Notice that this is effectively the same expression as in the previous example. The only differences are the name of the target layer, source point in this case, and the shorthand L.anchorPoint in place of L.transform.anchorPoint.) If you rotate the shape layer, or move a camera around, the rays seem to be coming from the position of the null.

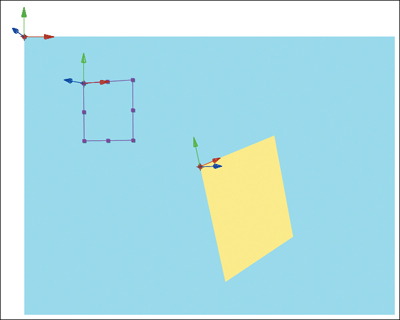

Apply 2D Layer as Decal onto 3D Layer

Sometimes you may need to use more than one layer

space transform in a single expression. For example, you might want to

apply a 2D layer like a decal to a 3D layer using the Corner Pin effect.

To pull this off you need a way to mark on the 3D layer where you want

the corners of the 2D layer to be pinned. Apply four point controls to

the 3D layer, and you can then position each of the 2D layer’s corners

individually on the surface of the 3D layer. To keep things simple,

rename each of the point controls to indicate the corner it represents,

making the upper-left one UL, the upper-right UR, and so on. Once the

point controls are in place, you can apply an expression like this one (shown here for the upper-left parameter) to each parameter of the 2D layer’s Corner Pin effect:

L = thisComp.layer("target");

fromComp(L.toComp(L.effect("UL")("Point")))

The first line is just the little shorthand trick so that you can reference

the target layer (the 3D layer in this case) more succinctly. The

second line translates the position of point controls from the 3D

layer’s space to the layer space of the 2D layer with the Corner Pin

effect. There are no layer-to-layer space transforms, however, so the

best you can do is transform twice: first from the 3D layer to comp

space and then from comp space to the 2D layer. (Remember to edit the

expression slightly for each of the other corner parameters so that it

references the corresponding point control on the 3D layer.)

So, inside the parentheses you convert the point

control from the 3D layer’s space into comp space. Then you convert that

result to the 2D layer’s space. Nothing to it, right?
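The two-step idea can be sketched outside After Effects in plain JavaScript. The sketch below assumes flat 2D layers with only position, anchor point, and uniform scale (no rotation, no camera, no 3D), so it is far simpler than the real case. The functions toComp2D and fromComp2D are hypothetical stand-ins for the real toComp() and fromComp() methods, and all values are made up for illustration.

```javascript
// Hypothetical stand-in for toComp(): layer space -> comp space.
function toComp2D(layer, p) {
  const s = layer.scale / 100;
  return [
    layer.position[0] + (p[0] - layer.anchorPoint[0]) * s,
    layer.position[1] + (p[1] - layer.anchorPoint[1]) * s,
  ];
}

// Hypothetical stand-in for fromComp(): comp space -> layer space.
// This is simply the inverse of toComp2D for the same layer.
function fromComp2D(layer, p) {
  const s = layer.scale / 100;
  return [
    (p[0] - layer.position[0]) / s + layer.anchorPoint[0],
    (p[1] - layer.position[1]) / s + layer.anchorPoint[1],
  ];
}

// Assumed sample layers.
const target = { position: [500, 400], anchorPoint: [200, 150], scale: 50 };
const decal = { position: [960, 540], anchorPoint: [480, 270], scale: 200 };

// A point control value, expressed in the target layer's space.
const ulOnTarget = [40, 30];

// Step 1: target layer space -> comp space.
const ulInComp = toComp2D(target, ulOnTarget);

// Step 2: comp space -> decal layer space.
const ulOnDecal = fromComp2D(decal, ulInComp);

console.log(ulInComp, ulOnDecal);
```

Because there is no direct layer-to-layer conversion, comp space acts as the common meeting ground: each layer only needs to know how to get into and out of it.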

Reduce Saturation Away from Camera

Let’s change gears a little. You want to create an

expression that reduces a layer’s saturation as it moves away from the

camera in a 3D scene. In addition, you want this expression to work even

if the target layer and the camera happen to be children of other

layers. You can accomplish this by applying the Color Balance (HLS)

effect to the target layer and applying this expression to the

Saturation parameter:

minDist = 900;

maxDist = 2000;

C = thisComp.activeCamera.toWorld([0,0,0]);

dist = length(toWorld(transform.anchorPoint), C);

ease(dist, minDist, maxDist, 0, -100)

The first two lines define variables that will be

used to set the boundaries of this effect. If the target layer’s

distance from the camera is less than minDist, you’ll leave the Saturation setting unchanged at 0. If the distance is greater than maxDist you want to completely desaturate the layer with a setting of –100.

The third line of the expression creates variable C,

which represents the position of the comp’s currently active camera in

world space. It’s important to note that cameras and lights don’t have

anchor points, so you have to convert a

specific location in the camera’s layer space. It turns out that, in

its own layer space, a camera’s location is represented by the array

[0,0,0] (that is, the X, Y, and Z coordinates are all 0).

The next line creates another variable, dist, which represents the distance between the camera and the anchor point of the target layer. You do this with the help of length(),

which takes two parameters and calculates the distance between them.

The first parameter is the world location of the target layer and the

second parameter is the world location of the camera, calculated

previously.
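As a rough illustration of what the distance calculation does, here is a plain JavaScript sketch. The function name distance and the sample coordinates are assumptions for illustration; in an expression you would simply call length() with the two world-space points.

```javascript
// Straight-line (Euclidean) distance between two points given as arrays
// of equal length, such as [x, y, z] world coordinates.
function distance(a, b) {
  let sumOfSquares = 0;
  for (let i = 0; i < a.length; i++) {
    const d = a[i] - b[i];
    sumOfSquares += d * d;
  }
  return Math.sqrt(sumOfSquares);
}

// Assumed sample world positions.
const cameraWorld = [960, 540, -1500];
const layerWorld = [960, 540, 0];

// The layer sits directly in front of the camera, 1500 pixels away.
const dist = distance(layerWorld, cameraWorld);
console.log(dist);
```

With minDist set to 900 and maxDist set to 2000, a distance of 1500 falls inside the range, so the layer would be partially desaturated.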

All that’s left to do is calculate the actual

Saturation value based on the layer’s current distance from the camera.

You do this with the help of ease(), one of the expression language’s amazingly useful interpolation methods. What this line basically says is “as the value of dist varies from minDist to maxDist, vary the output of ease() from 0 to –100.”

Interpolation Methods

After Effects provides some very handy global

interpolation methods for converting one set of values to another. Say

you wanted an Opacity expression that would fade in over half a second,

starting at the layer’s In point. This is very easily accomplished using

the linear() interpolation method:

linear(time, inPoint, inPoint + 0.5, 0, 100)

As you can see, linear() accepts five parameters (there is also a seldom-used version that accepts only three parameters), which are, in order:

input value that is driving the change

minimum input value

maximum input value

output value corresponding to the minimum input value

output value corresponding to the maximum input value

In the example, time is the input value

(first parameter), and as it varies from the layer’s In point (second

parameter) to 0.5 seconds beyond the In point (third parameter), the

output of linear() varies from 0 (fourth parameter) to 100 (fifth parameter). For values of the input parameter that are less than the minimum input value, the output of linear()

will be clamped at the value of the fourth parameter. Similarly, if the

value of the input parameter is greater than the maximum input value,

the output of linear() will be clamped to the value of the

fifth parameter. Back to the example, at times before the layer’s In

point the Opacity value will be held at 0. From the layer’s In point

until 0.5 seconds beyond the In point, the Opacity value ramps smoothly

from 0 to 100. For times beyond the In point + 0.5 seconds, the Opacity

value will be held at 100.
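The clamping behavior described above can be sketched in plain JavaScript. The function name linearMap is hypothetical, and the sketch handles only single numbers; it is meant to mirror the behavior described for the five-parameter form of linear(), not to reproduce After Effects' own implementation.

```javascript
// Map t from the input range [tMin, tMax] to the output range [v1, v2],
// holding the output at v1 below tMin and at v2 above tMax.
function linearMap(t, tMin, tMax, v1, v2) {
  if (t <= tMin) return v1;
  if (t >= tMax) return v2;
  return v1 + (v2 - v1) * (t - tMin) / (tMax - tMin);
}

// Assumed In point of 2 seconds, with a half-second fade-in.
const inPoint = 2;
const fade = (time) => linearMap(time, inPoint, inPoint + 0.5, 0, 100);

console.log(fade(1));    // before the In point
console.log(fade(2.25)); // halfway through the fade
console.log(fade(3));    // after the fade has finished
```

The three calls cover the three cases in the text: held at 0 before the In point, ramping in between, and held at 100 afterward.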

Close-up: Expression Controls

Expression controls are

actually layer effects whose main purpose is to allow you to attach user

interface controls to an expression. These controls come in six

versions: Slider Control, Point Control, Angle Control, Checkbox Control, Color Control, and Layer Control.

All types of controls (except Layer Control) can be keyframed and can

themselves accept expressions. The most common use, however, is to

enable you to set or change a value used in an expression calculation

without having to edit the code. For example, you might want to be able

to easily adjust the frequency and amplitude parameters of a wiggle()

expression. You could accomplish this by applying two slider controls

to the layer with the expression (Effects > Expression Controls).

It’s usually a good idea to give your controls descriptive names; say

you change the name of the first slider to frequency and the second one

to amplitude. You would then set up your expression like this (using the

pick whip to create the references to the sliders would be smart):

freq = effect("frequency")("Slider");

amp = effect("amplitude")("Slider");

wiggle(freq, amp)

Now, you can control

the frequency and amplitude of the wiggle via the sliders. With each of

the control types (again, with the exception of Layer Control) you can

edit the numeric value directly, or you can set the value using the

control’s gadget. One unfortunate side note about

expression controls is that because you can’t apply effects to cameras

or lights, neither can you apply expression controls to them.

Sometimes it helps to read it from left to right like

this: “As the value of time varies from the In point to 0.5 seconds

past the In point, vary the output from 0 to 100.”

The second parameter should always be less than the third parameter.

Failure to set it up this way can result in some bizarre behavior.

Note that the output values need not be numbers.

Arrays work as well. If you want to slowly move a layer from the

composition’s upper-left corner to the lower-right corner over the time

between the layer’s In point and Out point, you could set it up like

this:

linear(time, inPoint, outPoint, [0,0], [thisComp.width, thisComp.height])

There are other equally useful interpolation methods in addition to linear(), each taking exactly the same set of parameters. easeIn() provides ease at the minimum value side of the interpolation, easeOut() provides it at the maximum value side, and ease() provides it at both. So if you wanted the previous example to ease in and out of the motion, you could do it like this:

ease(time, inPoint, outPoint, [0,0], [thisComp.width, thisComp.height])
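To show how an eased interpolation differs from a linear one when the outputs are arrays, here is a plain JavaScript sketch. The text does not specify the exact curve that ease() uses, so this sketch substitutes the common smoothstep curve as an assumption. The name easeMap and the sample comp size are also hypothetical.

```javascript
// Eased, clamped interpolation that accepts numbers or arrays as outputs.
// ASSUMPTION: smoothstep stands in for the ease() curve, which may differ.
function easeMap(t, tMin, tMax, v1, v2) {
  // Normalize t to the 0..1 range and clamp it.
  const u = Math.min(1, Math.max(0, (t - tMin) / (tMax - tMin)));
  // Smoothstep: slow start, faster middle, slow finish.
  const k = u * u * (3 - 2 * u);
  const mix = (a, b) => a + (b - a) * k;
  return Array.isArray(v1) ? v1.map((a, i) => mix(a, v2[i])) : mix(v1, v2);
}

// Assumed values: a 1920x1080 comp and a layer running from 0 to 10 seconds.
const start = [0, 0];
const end = [1920, 1080];

console.log(easeMap(0, 0, 10, start, end));   // at the In point
console.log(easeMap(2.5, 0, 10, start, end)); // a quarter of the way through
console.log(easeMap(5, 0, 10, start, end));   // halfway
console.log(easeMap(10, 0, 10, start, end));  // at the Out point
```

A quarter of the way through, the eased position has covered less than a quarter of the distance, which is the slow start you see on screen. The halfway point matches the linear version.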

Fade While Moving Away from Camera

Just as you can reduce a layer’s saturation as it

moves away from the camera, you can reduce Opacity. The expression is,

in fact, quite similar:

minDist = 900;

maxDist = 2000;

C = thisComp.activeCamera.toWorld([0,0,0]);

dist = length(toWorld(transform.anchorPoint), C);

ease(dist, minDist, maxDist, 100, 0)

The only differences between this expression and the previous one are the fourth and fifth parameters of the ease() statement. In this case, as the distance increases from 900 to 2000, the opacity fades from 100% to 0%.

From Comp Space to Layer Surface

There’s a somewhat obscure layer space transform that you haven’t looked at yet, namely fromCompToSurface().

This translates a location from the current comp view to the location

on a 3D layer’s surface that lines up with that point (from the camera’s

perspective). When would that be useful?

Close-up: More About sampleImage()

You can sample the color and alpha data of a rectangular area of a layer using the layer method sampleImage(). You supply up to four parameters to sampleImage()

and it returns color and alpha data as a four-element array (red,

green, blue, alpha), where the values have been normalized so that they

fall between 0.0 and 1.0. The four parameters are sample point, sample radius, post-effect flag, and sample time.

The sample point is given in layer space coordinates, where [0, 0]

represents the center of the layer’s top left pixel. The sample radius

is a two-element array (x radius, y radius) that specifies the

horizontal and vertical distance from the sample point to the edges of

the rectangular area being sampled. To sample a single pixel, you would

set this value to [0.5, 0.5], half a pixel in each direction from the

center of the pixel at the sample point. The post-effect flag is

optional (its default value is true if you omit it) and specifies

whether you want the sample to be taken after masks and effects are

applied to the layer (true) or before (false). The sample time parameter

specifies the time at which the sample is to be taken. This parameter

is also optional (the default value is the current composition time),

but if you include it, you must also include the post-effect flag

parameter. As an example, here’s how you could sample the red value of

the pixel at a layer’s center, after any effects and masks have been

applied, at a time one second prior to the current composition time:

mySample = sampleImage([width, height]/2, [0.5,0.5], true, time - 1);

myRedSample = mySample[0];


Imagine you have a 2D comp-sized layer named Beam, to

which you have applied the Beam effect. You want a Lens Flare effect on

a 3D layer to line up with the ending point of the Beam effect on the

2D layer. You can do it by applying this expression to the Flare Center

parameter of the Lens Flare effect on the 3D layer:

beamPos = thisComp.layer("beam").effect("Beam")("Ending Point");

fromCompToSurface(beamPos)

First, store the location of the ending point of the Beam effect into the variable beamPos.

Now you can take a couple of shortcuts because of the way things are

set up. First, the Ending Point parameter is already represented as a

location in the Beam layer’s space. Second, because the Beam layer is a

comp-sized layer that hasn’t been moved or scaled, its layer space will

correspond exactly to the Camera view (which is the same as comp space).

Therefore, you can assume that the ending point is already represented

in comp space. If the Beam layer were a different size than the comp,

located somewhere other than the comp’s center, or scaled, you couldn’t

get away with this. You would have to convert the ending point from

Beam’s layer space to comp space.

Now all you have to do is translate the beamPos

variable from comp space to the corresponding point of the surface of

the layer with Lens Flare, which is accomplished easily with fromCompToSurface().